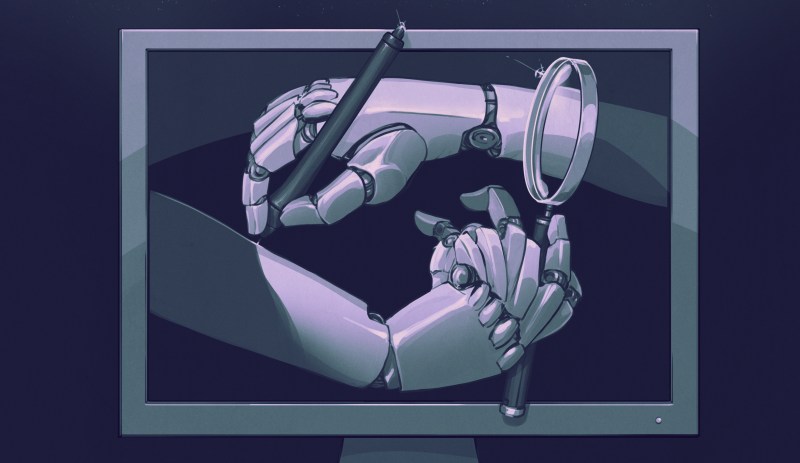

Why Model Collapse in LLMs is Inevitable With Self-Learning

There is a persistent belief in the ‘AI’ community that large language models (LLMs) have the ability to learn and self-improve by tweaking the weights in their vector space. Although …