XKCD, Interstellar Asteroid

Via the stellarly comic mind of Randall Munroe at XKCD.

Permalink

The post XKCD, Interstellar Asteroid appeared first on Security Boulevard.

Continue reading XKCD, Interstellar Asteroid

Collaborate Disseminate

Via the stellarly comic mind of Randall Munroe at XKCD.

Permalink

The post XKCD, Interstellar Asteroid appeared first on Security Boulevard.

Continue reading XKCD, Interstellar Asteroid

You can have data without information, but you cannot have information without data. ~Daniel Keys Moran

Here we resume our discussion of DFIR Redefined: Deeper Functionality for Investigators with R as begun in Part 1.First, now that my presentation se… Continue reading toolsmith #129 – DFIR Redefined: Deeper Functionality for Investigators with R – Part 2

You can have data without information, but you cannot have information without data. ~Daniel Keys Moran

Here we resume our discussion of DFIR Redefined: Deeper Functionality for Investigators with R as begun in Part 1.

First, now that my presentation season has wrapped up, I’ve posted the related material on the Github for this content. I’ve specifically posted the most recent version as presented at SecureWorld Seattle, which included Eric Kapfhammer‘s contributions and a bit of his forward thinking for next steps in this approach.

When we left off last month I parted company with you in the middle of an explanation of analysis of emotional valence, or the “the intrinsic attractiveness (positive valence) or averseness (negative valence) of an event, object, or situation”, using R and the Twitter API. It’s probably worth your time to go back and refresh with the end of Part 1. Our last discussion point was specific to the popularity of negative tweets versus positive tweets with a cluster of emotionally neutral retweets, two positive retweets, and a load of negative retweets. This type of analysis can quickly give us better understanding of an attacker collective’s sentiment, particularly where the collective is vocal via social media. Teeing off the popularity of negative versus positive sentiment, we can assess the actual words fueling such sentiment analysis. It doesn’t take us much R code to achieve our goal using the apply family of functions. The likes of apply, lapply, and sapply allow you to manipulate slices of data from matrices, arrays, lists and data frames in a repetitive way without having to use loops. We use code here directly from Michael Levy, Social Scientist, and his Playing with Twitter Data post.

polWordTables =

sapply(pol, function(p) {

words = c(positiveWords = paste(p[[1]]$pos.words[[1]], collapse = ‘ ‘),

negativeWords = paste(p[[1]]$neg.words[[1]], collapse = ‘ ‘))

gsub(‘-‘, ”, words) # Get rid of nothing found’s “-“

}) %>%

apply(1, paste, collapse = ‘ ‘) %>%

stripWhitespace() %>%

strsplit(‘ ‘) %>%

sapply(table)

par(mfrow = c(1, 2))

invisible(

lapply(1:2, function(i) {

dotchart(sort(polWordTables[[i]]), cex = .5)

mtext(names(polWordTables)[i])

}))

The result is a tidy visual representation of exactly what we learned at the end of Part 1, results as noted in Figure 1.

| Figure 1: Positive vs negative words |

Content including words such as killed, dangerous, infected, and attacks are definitely more interesting to readers than words such as good and clean. Sentiment like this could definitely be used to assess potential attacker outcomes and behaviors just prior, or in the midst of an attack, particularly in DDoS scenarios. Couple sentiment analysis with the ability to visualize networks of retweets and mentions, and you could zoom in on potential leaders or organizers. The larger the network node, the more retweets, as seen in Figure 2.

|

| Figure 2: Who is retweeting who? |

Remember our initial premise, as described in Part 1, was that attacker groups often use associated hashtags and handles, and the minions that want to be “part of” often retweet and use the hashtag(s). Individual attackers either freely give themselves away, or often become easily identifiable or associated, via Twitter. Note that our dominant retweets are for @joe4security, @HackRead, @defendmalware (not actual attackers, but bloggers talking about attacks, used here for example’s sake). Figure 3 shows us who is mentioning who.

|

| Figure 3: Who is mentioning who? |

Note that @defendmalware mentions @HackRead. If these were actual attackers it would not be unreasonable to imagine a possible relationship between Twitter accounts that are actively retweeting and mentioning each other before or during an attack. Now let’s assume @HackRead might be a possible suspect and you’d like to learn a bit more about possible additional suspects. In reality @HackRead HQ is in Milan, Italy. Perhaps Milan then might be a location for other attackers. I can feed in Twittter handles from my retweet and mentions network above, query the Twitter API with very specific geocode, and lock it within five miles of the center of Milan.

The results are immediate per Figure 4.

|

| Figure 4: GeoLocation code and results |

Obviously, as these Twitter accounts aren’t actual attackers, their retweets aren’t actually pertinent to our presumed attack scenario, but they definitely retweeted @computerweekly (seen in retweets and mentions) from within five miles of the center of Milan. If @HackRead were the leader of an organization, and we believed that associates were assumed to be within geographical proximity, geolocation via the Twitter API could be quite useful. Again, these are all used as thematic examples, no actual attacks should be related to any of these accounts in any way.

Fast Frugal Trees (decision trees) for prioritizing criticality

With the abundance of data, and often subjective or biased analysis, there are occasions where a quick, authoritative decision can be quite beneficial. Fast-and-frugal trees (FFTs) to the rescue. FFTs are simple algorithms that facilitate efficient and accurate decisions based on limited information.

Nathaniel D. Phillips, PhD created FFTrees for R to allow anyone to easily create, visualize and evaluate FFTs. Malcolm Gladwell has said that “we are suspicious of rapid cognition. We live in a world that assumes that the quality of a decision is directly related to the time and effort that went into making it.” FFTs, and decision trees at large, counter that premise and aid in the timely, efficient processing of data with the intent of a quick but sound decision. As with so much of information security, there is often a direct correlation with medical, psychological, and social sciences, and the use of FFTs is no different. Often, predictive analysis is conducted with logistic regression, used to “describe data and to explain the relationship between one dependent binary variable and one or more nominal, ordinal, interval or ratio-level independent variables.” Would you prefer logistic regression or FFTs?

|

| Figure 5: Thanks, I’ll take FFTs |

Here’s a text book information security scenario, often rife with subjectivity and bias. After a breach, and subsequent third party risk assessment that generated a ton of CVSS data, make a fast decision about what treatments to apply first. Because everyone loves CVSS.

|

| Figure 6: CVSS meh |

Nothing like a massive table, scored by base, impact, exploitability, temporal, environmental, modified impact, and overall scores, all assessed by a third party assessor who may not fully understand the complexities or nuances of your environment. Let’s say our esteemed assessor has decided that there are 683 total findings, of which 444 are non-critical and 239 are critical. Will FFTrees agree? Nay! First, a wee bit of R code.

library(“FFTrees”)

cvss cvss.fft plot(cvss.fft, what = “cues”)

plot(cvss.fft,

main = “CVSS FFT”,

decision.names = c(“Non-Critical”, “Critical”))

Guess what, the model landed right on impact and exploitability as the most important inputs, and not just because it’s logically so, but because of their position when assessed for where they fall in the area under the curve (AUC), where the specific curve is the receiver operating characteristic (ROC). The ROC is a “graphical plot that illustrates the diagnostic ability of a binary classifier system as its discrimination threshold is varied.” As for the AUC, accuracy is measured by the area under the ROC curve where an area of 1 represents a perfect test and an area of .5 represents a worthless test. Simply, the closer to 1, the better. For this model and data, impact and exploitability are the most accurate as seen in Figure 7.

|

| Figure 7: Cue rankings prefer impact and exploitability |

The fast and frugal tree made its decision where impact and exploitability with scores equal or less than 2 were non-critical and exploitability greater than 2 was labeled critical, as seen in Figure 8.

|

| Figure 8: The FFT decides |

Ah hah! Our FFT sees things differently than our assessor. With a 93% average for performance fitting (this is good), our tree, making decisions on impact and exploitability, decides that there are 444 non-critical findings and 222 critical findings, a 17 point differential from our assessor. Can we all agree that mitigating and remediating critical findings can be an expensive proposition? If you, with just a modicum of data science, can make an authoritative decision that saves you time and money without adversely impacting your security posture, would you count it as a win? Yes, that was rhetorical.

->->

|

| Figure 9: User logon data |

With 210 line of R, including comments, log read, file output, and graphing we can visualize and alert on DARPA-549521’s data as seen in Figure 10.

| Figure 10: User behavior outside the confidence interval |

We can detect when a user’s account exhibits changes in their seasonality as it relates to a confidence interval established (learned) over time. In this case, on 27 AUG 2017, the user topped her threshold of 19 logons thus triggering an exception. Now imagine using this model to spot anomalous user behavior across all users and you get a good feel for the model’s power.

Eric points out that there are, of course, additional options for modeling including:

“To competently perform rectifying security service, two critical incident response elements are necessary: information and organization.” ~ Robert E. Davis

I’ve been presenting DFIR Redefined: Deeper Functionality for Investigators with R across the country at various conference venues and thought it would helpful to provide details for readers.

The basic premise?

Incident responders and investigators need all the help they can get.

Let me lay just a few statistics on you, from Secure360.org’s The Challenges of Incident Response, Nov 2016. Per their respondents in a survey of security professionals:

In short, according to Nathan Burke, “It’s just not mathematically possible for companies to hire a large enough staff to investigate tens of thousands of alerts per month, nor would it make sense.”

The 2017 SANS Incident Response Survey, compiled by Matt Bromiley in June, reminds us that “2016 brought unprecedented events that impacted the cyber security industry, including a myriad of events that raised issues with multiple nation-state attackers, a tumultuous election and numerous government investigations.” Further, “seemingly continuous leaks and data dumps brought new concerns about malware, privacy and government overreach to the surface.”

Finally, the survey shows that IR teams are:

To that end, what concepts and methods further enable handlers and investigators as they continue to strive for faster detection and containment? Data science and visualization sure can’t hurt. How can we be more creative to achieve “deeper functionality”? I propose a two-part series on Deeper Functionality for Investigators with R with the following DFIR Redefined scenarios:

R is “100% focused and built for statistical data analysis and visualization” and “makes it remarkably simple to run extensive statistical analysis on your data and then generate informative and appealing visualizations with just a few lines of code.”

With R you can interface with data via file ingestion, database connection, APIs and benefit from a wide range of packages and strong community investment.

From the Win-Vector Blog, per John Mount “not all R users consider themselves to be expert programmers (many are happy calling themselves analysts). R is often used in collaborative projects where there are varying levels of programming expertise.”

I propose that this represents the vast majority of us, we’re not expert programmers, data scientists, or statisticians. More likely, we’re security analysts re-using code for our own purposes, be it red team or blue team. With a very few lines of R investigators might be more quickly able to reach conclusions.

All the code described in the post can be found on my GitHub.

Have you been pwned?

This scenario I covered in an earlier post, I’ll refer you to Toolsmith Release Advisory: Steph Locke’s HIBPwned R package.

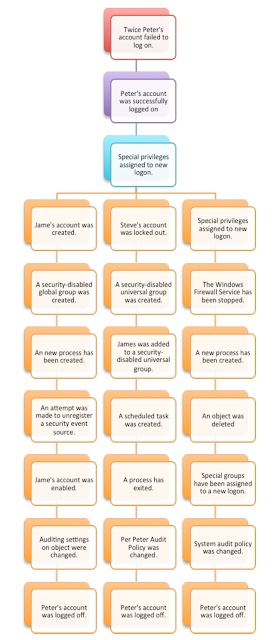

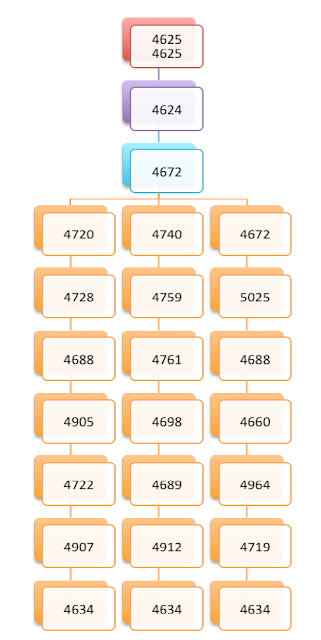

Visualization for malicious Windows Event Id sequences

|

| Figure 1 |

|

| Figure 2 |

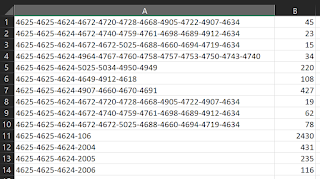

Taking related log data, parsing and counting it for visualization with R would look something like Figure 3.

|

| Figure 3 |

|

| Figure 5 |

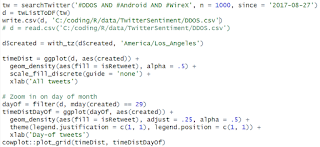

The result is a pair of graphs color coded by tweets and retweets per Figure 6.

|

| Figure 6 |

This gives you an immediate feels for spikes in interest by day as well as time of day, particularly with attention to retweets.

|

| Figure 7 |

The result in the scenario ironically indicates that the majority of related tweets using our hashtags of interest are coming from Androids per Figure 8. 🙂

|

| Figure 8 |

|

| Figure 9 |

Another line of questioning to consider: which tweets are more often retweeted, positive or negative? As you can imagine with information security focused topics, negativity wins the day.

Three lines of R…

ggplot(orig, aes(x = emotionalValence, y = retweetCount)) +

geom_point(position = ‘jitter’) +

geom_smooth()

…and we learn just how popular negative tweets are in Figure 10.

|

| Figure 10 |

There are cluster of emotionally neutral retweets, two positive retweets, and a load of negative retweets. This type of analysis can quickly lead to a good feel for the overall sentiment of an attacker collective, particularly one with less opsec and more desire for attention via social media.

In Part 2 of DFIR Redefined: Deeper Functionality for Investigators with R we’ll explore this scenario further via sentiment analysis and Twitter data, as well as Fast Frugal Trees (decision trees) for prioritizing criticality.

Let me know if you have any questions on the first part of this series via @holisticinfosec or russ at holisticinfosec dot org.

Cheers…until next time.

The post toolsmith #128 – DFIR Redefined: Deeper Functionality for Investigators with R – Part 1 appeared first on Security Boulevard.

“To competently perform rectifying security service, two critical incident response elements are necessary: information and organization.” ~ Robert E. DavisI’ve been presenting DFIR Redefined: Deeper Functionality for Investigators with R across t… Continue reading toolsmith #128 – DFIR Redefined: Deeper Functionality for Investigators with R – Part 1

Feedzai is announcing a $50 million Series C this morning led by an unnamed VC with additional capital from Sapphire Ventures. The six year old startup builds machine learning tools to help banks and merchants spot payment fraud. In today’s rapidly maturing world of fintech, Feedzai is trying to thread the needle between turnkey solution and customizable platform. With 60 clients… Read More

Feedzai is announcing a $50 million Series C this morning led by an unnamed VC with additional capital from Sapphire Ventures. The six year old startup builds machine learning tools to help banks and merchants spot payment fraud. In today’s rapidly maturing world of fintech, Feedzai is trying to thread the needle between turnkey solution and customizable platform. With 60 clients… Read More Continue reading Feedzai closes $50M Series C to help banks and merchants identify fraud with AI

NYU professor Allison Parrish describes a new frontier in marrying textual analysis and poetry. Continue reading How to Harness Data Science for Experimental Creative Writing

When Alteryx acquired Yhat in June, it was only a matter of time before the startup’s data-science management software began showing up in Alteryx. Just today, the company announced Alteryx Promote, a new tool based on Yhat’s product set.

When Alteryx acquired Yhat in June, it was only a matter of time before the startup’s data-science management software began showing up in Alteryx. Just today, the company announced Alteryx Promote, a new tool based on Yhat’s product set.

The company made the announcement at the Alteryx Inspire Europe customer event taking place in London this week.

Alteryx Promote gives data… Read More Continue reading Alteryx Promote puts data science to work across the company

Dataiku, a French startup that helps data analysts communicate with data scientists to build more meaningful data applications, announced a significant funding round today. The company scored a $28 million Series B investment led by Battery Ventures with help from FirstMark, Serena Capital and Alven. Today’s money brings the total raised to almost $45 million. Its most recent priot round… Read More

Dataiku, a French startup that helps data analysts communicate with data scientists to build more meaningful data applications, announced a significant funding round today. The company scored a $28 million Series B investment led by Battery Ventures with help from FirstMark, Serena Capital and Alven. Today’s money brings the total raised to almost $45 million. Its most recent priot round… Read More Continue reading Dataiku to enhance data tools with $28 million investment led by Battery Ventures

Imagine a pocket-sized hard drive capable of storing the entire list of 35 Million Songs?

This isn’t yet practical, but IBM has just taken a big step towards improving computing technology: IBM researchers just discovered a way to store data on a single atom.

Data storage is undergoing dramatic evolution, recently researchers successfully stored digital data — an entire operating system, a

Continue reading Scientists Store One Bit of Data on a Single Atom — Future of Data Storage